Robust Methods for Statistical Inference, Data Integrity and Interference Management (RODIN)

Acronym: RODIN

Code: PID2019-105717RB-C22

Funder: Spanish Government - AGENCIA ESTATAL DE INVESTIGACIÓN - MINISTERIO DE CIENCIA E INNOVACIÓN

Start date: June 1, 2020

End date: December 31, 2023

Keywords: Interference management, Statistical inference, Data integrity, Machine Learning security, Robustness, 5G network capacity, NOMA, opportunistic communications, full-duplex, anomaly detection

SPCOM Participants: Jordi Borràs Pino, Ferran De Cabrera Estanyol, Meritxell Lamarca Orozco, Aniol Martí, Francesc Molina Oliveras, Francesc Rey Micolau, Jaume Riba Sagarra, Josep Sala Alvarez, Gregori Vazquez Grau, Marc Vilà, N. Javier Villares Piera

SPCOM Principal Investigator: N. Javier Villares Piera

Partners: Universidad de Vigo, Universitat Politècnica de Catalunya

This work has been supported by the Spanish Ministry of Science and Innovation through project RODIN (PID2019-105717RB-C22 / MCIN / AEI / 10.13039/501100011033).

Summary

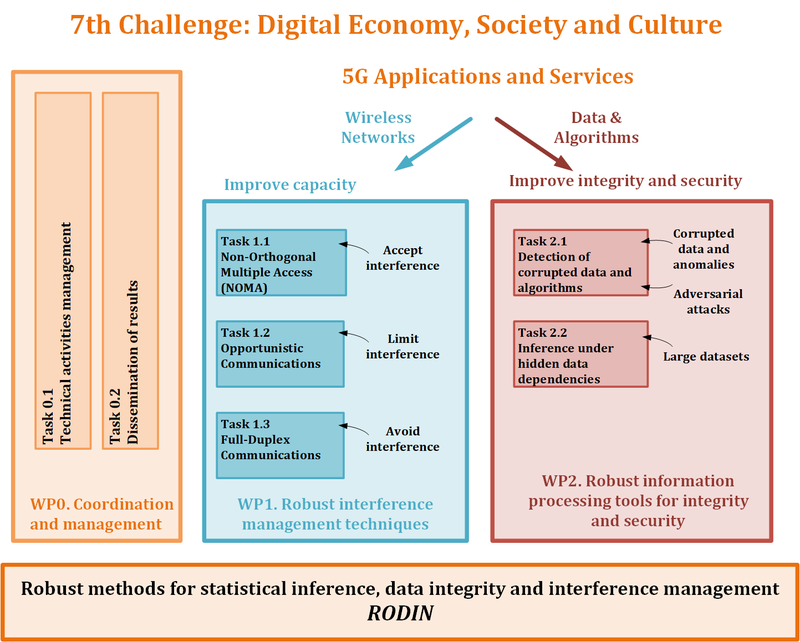

Future services will face significant challenges in dependably processing the large volumes of data made available by next-generation communication networks. These networks, in turn, will strive to provide increasingly fast, reliable, instantaneous and ubiquitous connectivity, and managing the resulting complexity will be no easy task. The coordinated project RODIN will contribute research on two of these challenges: interference management in wireless networks, and information processing under integrity and security threats.

Regarding the first challenge, interference is a fundamental limiting factor in wireless systems. The topics to be considered include the optimization of practical massive multiple-access communication systems (e.g. IoT) under realistic constraints, opportunistic communication to efficiently exploit idle resources in wireless systems, and self-interference cancellation in full-duplex transceivers with large antenna arrays.

The second challenge stems from the need to extract useful information from the vast amounts of data produced by IoT devices and other sources, which are likely to contain corrupted and missing values, and from the fact that the signal processing and machine learning tools to which these data are fed are prone to intentional manipulation and misappropriation. In this regard, the topics to be investigated include anomaly detection in large dynamic datasets, countermeasure design against malicious attacks on machine-learning-based classifiers, intellectual property rights (IPR) protection of deep neural networks by embedding watermarks during training, estimation of hidden dependencies across datasets based on information-theoretic tools, device fingerprinting for stabilized video sequences, and signal processing in the encrypted domain to preserve confidentiality.

Workplan

Publications

Project publications: https://futur.upc.edu/28881330

More information:

Send an email to: javier.villares@upc.edu
